CSDCA - Common Scale Discrete Choice Analysis > Non-compensatory Modeling and Simulation


All things are subject to interpretation. Whichever interpretation prevails at a given time is a function of power and not truth.

Friedrich Nietzsche

Abstract

An implementation of non-compensatory modeling and simulation of product choice probability is presented. The procedure relies on thresholds (cutoffs) of acceptability, below which a product is rejected regardless of its other properties. Perceptances computed with respect to the thresholds do not take the extremely large values often observed with the standard compensatory model. The simulation appears more realistic, especially in situations where an individual must decide whether to accept or reject an offered product when no alternatives are available.

Analysis and simulation of choices from a survey are commonly based on the assumption that individual attribute levels (the aspects of the product) contribute additively (as part-worths) to the utility of the product. In this approach, a product is understood as a bundle of aspects. This formulation of product utility is equivalent, up to a logarithmic transformation, to a Cobb-Douglas utility function of the product aspects. While the approach has many good properties, it also has some less welcome ones.
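The equivalence can be checked numerically. The minimal Python sketch below assumes hypothetical positive aspect amounts `x_k` and exponents `a_k` (neither comes from the study): the logarithm of the multiplicative Cobb-Douglas utility equals the sum of additive part-worths `a_k * log(x_k)`, so both forms rank products identically.

```python
import math

# Hypothetical aspect "amounts" x_k (must be positive) and exponents a_k.
aspects = [2.0, 0.5, 3.0]
exponents = [0.6, 0.3, 0.1]

def cobb_douglas(x, a):
    """Multiplicative Cobb-Douglas utility: product of x_k ** a_k."""
    u = 1.0
    for xk, ak in zip(x, a):
        u *= xk ** ak
    return u

def additive_part_worths(x, a):
    """Part-worths beta_k = a_k * log(x_k); their sum is the additive utility."""
    return [ak * math.log(xk) for xk, ak in zip(x, a)]

additive = sum(additive_part_worths(aspects, exponents))
# The additive utility is the logarithm of the Cobb-Douglas utility.
print(math.isclose(additive, math.log(cobb_douglas(aspects, exponents))))  # True
```

Because the logarithm is monotonic, a weak aspect lowers the additive sum by the same amount that it shrinks the multiplicative product (in log terms), and a strong aspect can always offset it.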


Marketing experts and psychologists have shown that compensating negative aspects of a product with positive ones does not correspond to real behavior. A number of non-compensatory models have been proposed. Those cited most often are:

- disjunctive model
- conjunctive model
- lexicographic model
- elimination by aspects (EBA)

In all models, a product is rejected when a certain acceptability threshold is not exceeded. An unsatisfactory aspect cannot be compensated by another, even a highly satisfactory one. A related consequence is that when one aspect is barely satisfactory, growing satisfaction with other aspects does not necessarily lead to higher satisfaction with the product. Other properties are a matter of opinion and are interpreted differently by different authors.
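The difference between compensation and a threshold rule can be shown on two hypothetical products. The Python sketch below assumes aspect scores on a common scale with a threshold of zero for every aspect (the names `additive_score` and `conjunctive_accept` are illustrative, not from the source): product A wins under additive scoring, yet it is rejected by the conjunctive rule because one aspect falls below its threshold.

```python
# Hypothetical aspect scores on a common scale and acceptability thresholds.
thresholds = {"price": 0.0, "quality": 0.0, "brand": 0.0}
product_a = {"price": -0.4, "quality": 0.9, "brand": 0.8}  # one unacceptable aspect
product_b = {"price": 0.2, "quality": 0.3, "brand": 0.2}   # all aspects acceptable

def additive_score(p):
    """Compensatory: a strong aspect can offset a weak one."""
    return sum(p.values())

def conjunctive_accept(p, thr):
    """Non-compensatory: every aspect must exceed its threshold."""
    return all(p[k] > thr[k] for k in thr)

print(additive_score(product_a) > additive_score(product_b))  # True: A wins additively
print(conjunctive_accept(product_a, thresholds))              # False: A is rejected
print(conjunctive_accept(product_b, thresholds))              # True
```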

Market research studies rely on a limited number of respondents who are supposed to represent the target population. Non-compensatory models are qualitative in nature and would provide at most a ranking of products in a scenario. Since ranks cannot be meaningfully averaged, especially in small samples, pure non-compensatory models are not suitable for simulation.

Additive utility models give quite a good correlation between observed and predicted results. However, such comparisons have been made against the results of the study itself rather than against market data. From our clients' feedback we know that the trends derived from the additive model are correct, but the calculated characteristics (e.g. shares) often differ substantially from those found in the real market. The main causes can be seen in the interviewing method, the neglect of respondents' non-compensatory reasoning, and the estimation and simulation models themselves.

In traditional CBC (Choice-Based Conjoint), a respondent chooses one product from several offered product profiles. If the product is a CPG/FMCG item, fast food or another good requiring little involvement, the situation induced in the test is very similar to a real one. Thresholds are hardly significant because several products usually exceed the acceptability thresholds, and the compensatory model is appropriate. The situation is different for durable products or repeated and/or long-term services that require high involvement of the decision maker. Acceptability thresholds play an important role when people decide about expenses higher than everyday ones, whether for household equipment, rent, ownership, a lease agreement, financial services, etc.

For the purpose of quantitative preferential simulation, the disjunctive model could be excluded. If a subject were deciding by a single aspect, sorting or sequential choice questions on the aspects would give the full answer, and any further analysis would be superfluous.

The conjunctive model can represent "satisficing", i.e. a strategy in which the chosen product is the first one that exceeds the thresholds in all important attributes. Such a product is therefore both sufficient and satisfying. The model is suggested for situations where searching for the optimal product would impose additional costs (time, money, effort, etc.) on the decision maker. Because the outcome of the conjunctive model contains a large element of randomness (it depends on which acceptable product happens to be encountered first), its pure form is not suitable for products that are selected with some caution. In this respect, the lexicographic and EBA models are better suited.
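Satisficing under the conjunctive model can be sketched in a few lines of Python. The attribute names, thresholds and offers below are hypothetical; the sketch simply returns the first product, in encounter order, whose important attributes all exceed their thresholds, which makes the dependence on order (the randomness mentioned above) explicit.

```python
from typing import Optional

def satisfice(products, thresholds, important) -> Optional[str]:
    """Return the name of the first product (in encounter order) whose important
    attributes all exceed their thresholds, or None if no product qualifies."""
    for name, levels in products:
        if all(levels[a] > thresholds[a] for a in important):
            return name
    return None

# Hypothetical thresholds: interest rate in % p.a. and an issuer score.
thresholds = {"rate": 2.0, "issuer_score": 0.0}
important = ["rate", "issuer_score"]
offers = [
    ("P1", {"rate": 1.5, "issuer_score": 0.4}),  # rate too low
    ("P2", {"rate": 2.5, "issuer_score": 0.1}),  # first acceptable offer
    ("P3", {"rate": 3.5, "issuer_score": 0.6}),  # better, but never examined
]
print(satisfice(offers, thresholds, important))                  # P2
print(satisfice(list(reversed(offers)), thresholds, important))  # P3
```

Reversing the encounter order changes the choice even though the set of offers is the same.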

In the lexicographic model, the subject evaluates products one by one, gradually accepting them into the consideration set and replacing them there. In the EBA (elimination by aspects) model, the subject gradually eliminates products until the final consideration set is left. Both models lead to the same consideration set of some size, from which the final choice is made.
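Both strategies can be sketched as deterministic simplifications in Python (Tversky's original EBA is probabilistic, which this sketch does not model). It assumes that higher attribute values are better and that attributes are listed in order of importance; the bond data, `issuer_score` and the cutoffs are hypothetical.

```python
def lexicographic(products, importance):
    """Evaluate products one by one, replacing the current favourite whenever a
    newcomer is better on the most important attribute on which they differ."""
    best_name, best_key = None, None
    for name, levels in products:
        key = tuple(levels[a] for a in importance)
        if best_key is None or key > best_key:
            best_name, best_key = name, key
    return best_name

def eliminate_by_aspects(products, importance, cutoffs):
    """Deterministic EBA: for each aspect in order of importance, drop products
    that fail its cutoff, until at most one product remains."""
    remaining = list(products)
    for a in importance:
        if len(remaining) <= 1:
            break
        remaining = [(n, lv) for n, lv in remaining if lv[a] >= cutoffs[a]]
    return [n for n, _ in remaining]

bonds = [
    ("X", {"issuer_score": 3, "rate": 2.0}),
    ("Y", {"issuer_score": 3, "rate": 3.0}),
    ("Z", {"issuer_score": 2, "rate": 3.5}),
]
importance = ["issuer_score", "rate"]
cutoffs = {"issuer_score": 3, "rate": 2.5}
print(lexicographic(bonds, importance))                  # Y
print(eliminate_by_aspects(bonds, importance, cutoffs))  # ['Y']
```

In this example both strategies end with the same consideration set, as the text describes.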

Non-compensatory models allow products in a set to be ranked. In a quantitative simulation, however, product characteristics must be continuous numeric values that can be averaged. Therefore, a hybrid model had to be developed.

The conjunctive model has been taken as the default non-compensatory model: a product can be accepted only if all its aspects are acceptable. When a product is understood as a bundle of independently valued aspects, the product acceptability is assumed to increase (not necessarily linearly) with a weighted product of the aspect perceptances. In the simplest case, the weights are constant exponents. This choice has some important consequences in the space of positively perceived aspects.

When at least one of the aspects is negative, only the negative aspects are used to estimate product perceptance. This reflects the fact that people mostly ignore positive aspects if some other aspects cause them to refuse the product.
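A minimal Python sketch of the weighted-product perceptance, assuming aspect perceptances in the interval (-1, 1) and positive constant exponents as weights (the function name and weight values are hypothetical). The exact way the source combines the negative aspects is not given, so the sketch only flags rejection by returning zero, consistent with setting the perceptance of a rejected product to zero.

```python
import math

def product_perceptance(aspect_perceptances, weights):
    """Weighted product of aspect perceptances with constant exponents.

    All aspects positive -> prod(p_k ** w_k).
    Any aspect zero or negative -> the product is rejected and 0.0 is returned
    (how the negative aspects themselves are combined is not modelled here).
    """
    if len(aspect_perceptances) != len(weights):
        raise ValueError("one weight per aspect is required")
    if any(p <= 0.0 for p in aspect_perceptances):
        return 0.0
    return math.prod(p ** w for p, w in zip(aspect_perceptances, weights))

weights = [1.0, 0.5, 0.2]                              # hypothetical exponents
print(product_perceptance([0.8, 0.5, 0.9], weights))   # positive value
print(product_perceptance([0.9, -0.2, 0.9], weights))  # 0.0: rejected
```

One consequence in the space of positive aspects: each factor `p ** w` lies in (0, 1] when 0 < p <= 1 and w > 0, so the product can never exceed its smallest factor. Improving the other aspects cannot lift a product above its weakest, barely acceptable aspect.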

The basic questionnaire for obtaining data for analysis and prospective simulation consists of the sections described on the page CSDCA - Common Scale Discrete Choice Analysis.


Choice sets in a CBC questionnaire are usually supplemented with the constant alternative "None of the offered", which allows the respondent to refuse all of the offered product profiles. It often seems to serve respondents as an escape from giving an answer. We use its estimated value only for testing and comparison, and do not rely on it.

Perceptance of a product is a complex function of the perception of product attributes. In our model it is estimated as a weighted product of attribute perceptances. It is positive if the perceptances of all aspects are positive for the subject. If the perceptance of any important attribute is zero or negative, the product is rejected and its perceptance is set to zero. This approach avoids the problem of counterbalancing unacceptable levels with acceptable ones.

A product may have some relatively unimportant or insignificant attributes. The threshold for such an attribute lies below all of the attribute's level part-worths, so the attribute cannot cause rejection of the product in a simulation. The model thus assesses attribute importance in a way completely different from the standard compensatory model.

The most reliable indicator of a product's success on the market is usually an estimate of its preferential share that does not account for the volatile constant alternative. To obtain such a value, a reasonable choice set must be created from competing products. A good indicator of acceptability that is independent of the other items in a choice set would be some form of stated acceptability, and the product perceptance is a suitable candidate.
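Whether an attribute can ever cause rejection follows directly from comparing its threshold with its level part-worths on the common scale. The Python sketch below uses hypothetical part-worths and thresholds for the bond attributes; a level at or below the threshold has zero or negative perceptance, so only attributes with such a level can reject a product.

```python
def attributes_that_can_reject(part_worths, thresholds):
    """Return attributes with at least one level at or below the threshold.
    An attribute whose threshold lies below all its level part-worths
    can never cause rejection of a product."""
    return sorted(
        a for a, levels in part_worths.items()
        if min(levels.values()) <= thresholds[a]
    )

# Hypothetical part-worths and thresholds on a common scale.
part_worths = {
    "issuer": {"A": 0.5, "B": -0.2, "C": 1.0, "D": 0.1},
    "rate": {1.5: -1.0, 2.0: -0.3, 2.5: 0.2, 3.0: 0.6, 3.5: 0.9},
    "maturity": {3: 0.4, 4: 0.3, 5: 0.1},
}
thresholds = {"issuer": 0.0, "rate": 0.0, "maturity": -0.5}
print(attributes_that_can_reject(part_worths, thresholds))  # ['issuer', 'rate']
```

Here "maturity" never leads to rejection, however its levels are combined with the others.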


| Property | Compensatory model | Non-compensatory model |
|---|---|---|
| Attribute thresholds | Unknown | Initial values for thresholds are estimated in separate PRIORS tasks and used as soft constraints in level part-worth estimation. They are then rectified using answers from a conjoint-like exercise. |
| Level acceptability | Undefined | Level perceptance is based on the estimated attribute threshold and is defined in the interval (-100%, 100%) to reveal either a positive or a negative attitude to the level. The zero value corresponds to indifference to the level. |
| Product acceptability | Acceptance is a relative measure related to the average product seen by respondents in the conjoint exercise. Comparison between exercises is mostly impossible. | Product perceptance reflects the thresholds of all important attributes. Under constant methodological conditions, it can be compared between different conjoint exercises or even studies. Unlike level perceptance, it is not defined for negative values. |
| | The additive nature of the model allows any attribute to outweigh all the other attributes. | The non-additive nature of the model prevents any attribute from outweighing all the other important attributes. |
| | Acceptance is controlled by all attributes indiscriminately. | Perceptance is mainly controlled by the perception of the important attribute with the lowest acceptability. This is a fundamental difference. |
| Questionnaire | A standard CBC exercise consists of repeated tasks of a similar feel, which respondents often describe as complicated and annoying. | While the whole questionnaire block is a bit longer than a conjoint exercise, it is composed of several short exercises, each of a different type and character, as described on the page CSDCA - Common Scale Discrete Choice Analysis. Interviewing fatigue can therefore be lower. |
| | A calibration exercise may be used to transform relative CBC-based utilities to reflect the stated intentions. | Calibration is inherent to non-compensatory modeling. |

The model was tested on a financial product – a bond – with three attributes:

| # | Attribute | Levels | Attribute type |
|---|---|---|---|
| 1. | Bond issuer | A, B, C, D | nominal |
| 2. | Annual interest rate | 1.5, 2, 2.5, 3, 3.5% p.a. | ordinal |
| 3. | Bond maturity | 3, 4, 5 years | nominal |

The questions were asked in two blocks.


Of the 1231 recruited respondents, 927 expressed potential interest in the product and answered questions from both blocks of the questionnaire. The average interviewing time was 4.5 min.

The web questionnaire was created in Sawtooth SSI, ver. 8.4.8. The data were processed using Sawtooth CBC/HB 5.5.4 and IBM SPSS Rel. 23. The simulator was written in MS Excel 2007.


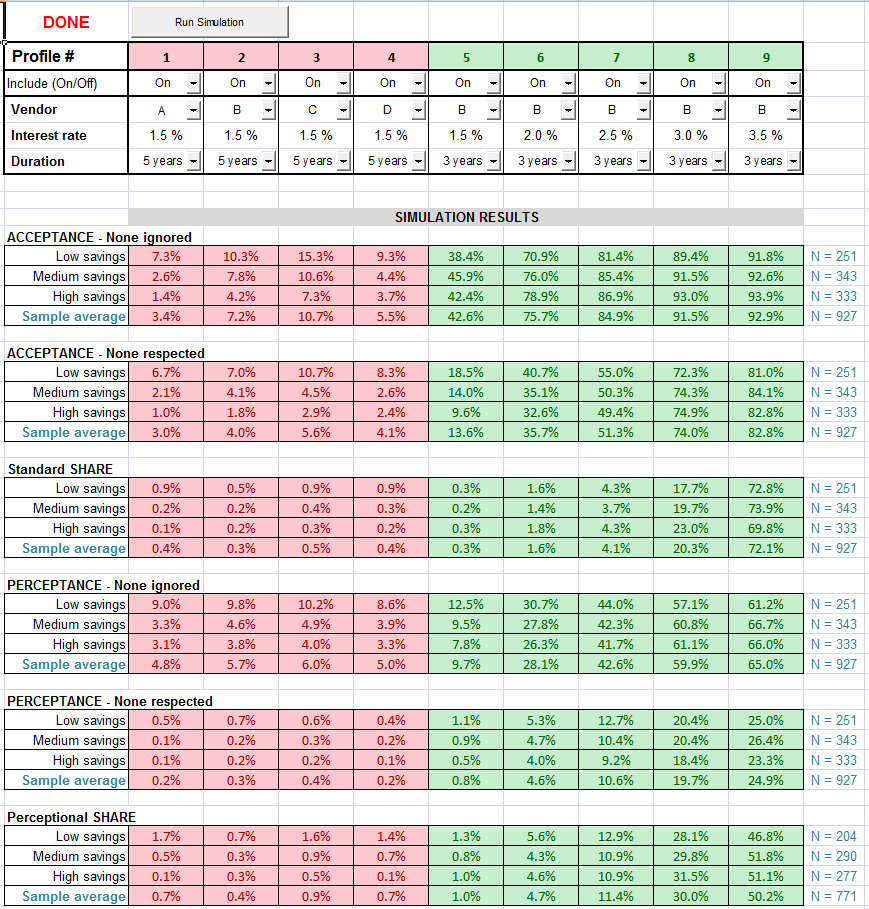

The picture shows the output of a preferential simulator. It contains 3 groups of results for the standard compensatory (additive) simulation model, each group having 4 lines, and 3 analogous groups for the perceptional non-compensatory model. The first 3 lines in each group are for 3 segments of respondents categorized by their savings; the fourth line shows the results for the whole sample.

The simulated profiles form two groups. The first four profiles, shaded in red, are created for the four bond issuers. All have the least favorable interest rate of 1.5% p.a. and a maturity of 5 years. The second group of five profiles, shaded in green, contains one profile for each of the five interest-rate levels. All have the preferred issuer C and the most popular maturity of 3 years. Profiles defined this way ensure that the whole acceptability range is covered.
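The nine simulated profiles can be written down directly from the attribute table. This is a minimal Python sketch; the variable names are hypothetical, and the levels and group definitions are the ones stated above.

```python
ISSUERS = ["A", "B", "C", "D"]
RATES = [1.5, 2.0, 2.5, 3.0, 3.5]  # % p.a.
MATURITIES = [3, 4, 5]             # years

# Red group: one profile per issuer, least favourable rate, 5-year maturity.
group_red = [{"issuer": i, "rate": 1.5, "maturity": 5} for i in ISSUERS]
# Green group: preferred issuer C, 3-year maturity, each interest-rate level.
group_green = [{"issuer": "C", "rate": r, "maturity": 3} for r in RATES]

profiles = group_red + group_green

# Every simulated profile must use levels from the tested design.
for p in profiles:
    assert p["issuer"] in ISSUERS and p["rate"] in RATES and p["maturity"] in MATURITIES

print(len(profiles))  # 9
```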

Acceptance is a conventional characteristic in the interval (0%, 100%), spanning the range from a definitely unacceptable to a definitely acceptable profile. As the specification of the reference profile is unknown, so is the meaning of its value. The actual value is influenced by the span of attributes and, through it, by the environment created by the other profiles shown in the interview. In contrast, perceptance is a measure based on the acceptability of levels stated by respondents. It is positive for profiles that have all attributes above their threshold values; in all other cases, it is zero.

The values shown are the probabilities of choice between two alternatives, the profile and the constant alternative "None", i.e. the probabilities of accepting the profile when the subject knows that some other profiles are available. The term acceptance is fully justified here for both models because it corresponds to the original definition. Both probabilities are computed in a similar way. As thresholds are respected in the perceptances, the perceptance-based values are generally lower than the standard acceptances.
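The standard two-alternative probability is a binary logit between the profile utility and the utility of "None". The Python sketch below shows that formula; the optional `perceptance` gate is a hypothetical illustration of how a threshold-respecting variant could force rejected profiles to zero, not the source's documented computation, which is only described as "similar".

```python
import math

def binary_acceptance(u_profile, u_none, perceptance=None):
    """Probability of choosing the profile over the constant alternative "None":
    exp(u) / (exp(u) + exp(u_none)), written in a numerically stable form.

    Hypothetical gate: if a perceptance is given and is zero (a threshold is
    violated), the profile is treated as rejected and 0.0 is returned.
    """
    if perceptance is not None and perceptance <= 0.0:
        return 0.0
    return 1.0 / (1.0 + math.exp(u_none - u_profile))

print(binary_acceptance(0.0, 0.0))                   # 0.5
print(binary_acceptance(1.0, 0.0, perceptance=0.0))  # 0.0
```

Such a gate can only lower the values, which is in line with the perceptance-based probabilities being generally lower than the standard acceptances.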

For the first group of profiles, the standard acceptances computed with and without the alternative "None" differ little. This result is surprising: the standard acceptances were expected to drop substantially once the presence of the alternative "None" was taken into account. By contrast, such a drop is observed with the perception-based values. We believe that perceptance computed without considering the None alternative is more likely to reflect real behavior. A more detailed inspection of the source data showed that respondents answered "None" even when profiles with all levels above the thresholds they had stated in the preceding questions were present.


Shares are obtained by applying the multinomial logit to the estimated utilities of the profiles. The constant alternative "None" does not enter this formula, so the shares of the simulated profiles sum to 100%.
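The multinomial logit share computation can be sketched in Python as follows. The utilities are hypothetical; subtracting the maximum utility before exponentiating does not change the shares but avoids overflow.

```python
import math

def mnl_shares(utilities):
    """Multinomial logit shares over the simulated profiles only
    (no "None" alternative), so the shares sum to 1 (100%)."""
    m = max(utilities)  # subtract the maximum for numerical stability
    exps = [math.exp(u - m) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]

shares = mnl_shares([0.2, 1.1, -0.5])  # hypothetical profile utilities
print([round(s, 3) for s in shares])
```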

The share values computed from both models for the first, four-member group of profiles are very similar. Differences are observed for the profiles in the second, five-member group.

The non-compensatory model made it possible to include in the preference shares only the 813 respondents for whom at least one of the 9 profiles was acceptable. The other 114 respondents, who were unwilling to accept any of the profiles, could be excluded. The conventional compensatory model lacks this property.
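The exclusion step can be sketched in Python, assuming that each respondent has one utility and one perceptance per profile and that preference shares are the average of individual multinomial logit shares (a common simulator convention, assumed here rather than taken from the source). Respondents with no profile of positive perceptance are dropped before averaging; all data are hypothetical.

```python
import math

def mnl_shares(utilities):
    """Multinomial logit shares for one respondent (no "None" alternative)."""
    m = max(utilities)
    exps = [math.exp(u - m) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]

def preference_shares(respondents):
    """Average individual MNL shares over respondents who accept at least one profile.

    respondents: list of (utilities, perceptances), each a list with one value per profile.
    Returns (average shares, number of respondents included).
    """
    included = [utils for utils, percs in respondents if any(p > 0.0 for p in percs)]
    if not included:
        raise ValueError("no respondent accepts any of the profiles")
    totals = [0.0] * len(included[0])
    for utils in included:
        for j, share in enumerate(mnl_shares(utils)):
            totals[j] += share
    return [t / len(included) for t in totals], len(included)

respondents = [
    ([0.5, 1.0, -0.2], [0.3, 0.6, 0.0]),
    ([0.1, -0.4, 0.8], [0.2, 0.0, 0.5]),
    ([1.2, 0.3, 0.0], [0.0, 0.0, 0.0]),  # accepts nothing: excluded
]
shares, n_included = preference_shares(respondents)
print(n_included)  # 2
```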

Our experience suggests that the compensatory model is suitable for situations in which the choice is made in the presence of products that are more or less mutually replaceable. This is typical for FMCG/CPG products in stores with a rich selection, for goods in the catalogs of online stores, and the like. However, it cannot be used to predict satisficing, i.e. the choice of a product that is just sufficient and satisfying.

There are situations where the offered products are not interchangeable, are interchangeable only with difficulty or at some other cost, or where essentially a single product is offered. When only adoption or rejection of a product is possible, and perception prevails in making the decision, a non-compensatory model of behavior might be preferred. To build the model, knowledge of the acceptability thresholds is essential. The model may also be useful where prediction of satisficing is desirable.

The consideration of thresholds provides an alternative to calibration. A calibration is basically a transformation of the raw utilities obtained from the standard compensatory model: the sensitivity to all attributes is adjusted with the same multiplier, which leaves the levels of different attributes incomparable. In contrast, the perceptional thresholds of individual attributes are mutually independent and can indicate critical attributes and their levels.
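A single-multiplier calibration can be sketched in Python to show why it adds no information about individual attributes. Every part-worth is scaled by the same hypothetical factor, so the relative spans of the attributes stay exactly as they were and nothing indicates where any attribute becomes unacceptable. The part-worths and the scale factor are illustrative only.

```python
import math

def calibrate(part_worths, scale):
    """Calibration as a single multiplier applied to all part-worths."""
    return {a: {lvl: scale * v for lvl, v in levels.items()}
            for a, levels in part_worths.items()}

def importance_ratio(part_worths, a, b):
    """Ratio of the part-worth spans (max - min) of attributes a and b."""
    def span(levels):
        return max(levels.values()) - min(levels.values())
    return span(part_worths[a]) / span(part_worths[b])

raw = {
    "issuer": {"A": 0.5, "B": -0.2, "C": 1.0, "D": 0.1},
    "rate": {1.5: -1.0, 3.5: 0.9},
}
calibrated = calibrate(raw, 1.7)  # hypothetical calibration factor

# The relative importance of attributes is unchanged by calibration.
print(math.isclose(importance_ratio(raw, "issuer", "rate"),
                   importance_ratio(calibrated, "issuer", "rate")))  # True
```

Attribute thresholds, by contrast, are estimated separately for each attribute and are not tied together by a common factor.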