> Conjoint Method Overview > Product Utility

Interpretation

The general notion of utility does not have a unique and unequivocal meaning, as already mentioned on page DCM Preference and Utility. Conjoint utilities from a choice-based experiment are probability-based indirect utilities. In the common additive model, the utility of a product is modeled as the sum of the part-worths of the attribute levels that make up the product profile. The level part-worths are estimated so that the reproduced (not quite correctly "predicted") probability of the observed choices is maximized. The ratio of the choice probability of a given product to the choice probability of a reference product is known as the odds of the two products. Utilities in the discrete choice conjoint model are defined as natural logarithms of these odds.
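A minimal sketch of the additive model and of the log-odds definition, assuming a multinomial logit choice rule. The attributes, levels and part-worth values in `PART_WORTHS` are hypothetical illustrations, not estimates from a real study.

```python
import math

# Hypothetical part-worths in logit units; the attributes, levels and values
# are illustrative assumptions, not estimates from a real study.
PART_WORTHS = {
    "brand": {"A": 0.4, "B": -0.4},
    "price": {"low": 0.7, "mid": 0.0, "high": -0.7},
}


def profile_utility(profile):
    """Additive model: the sum of the part-worths of the profile's levels."""
    return sum(PART_WORTHS[attr][level] for attr, level in profile.items())


def choice_probabilities(utilities):
    """Multinomial logit probabilities P(j) = exp(u_j) / sum_k exp(u_k)."""
    top = max(utilities)  # subtract the maximum for numerical stability
    weights = [math.exp(u - top) for u in utilities]
    total = sum(weights)
    return [w / total for w in weights]


u_product = profile_utility({"brand": "A", "price": "mid"})
u_reference = profile_utility({"brand": "B", "price": "high"})
p_product, p_reference = choice_probabilities([u_product, u_reference])

# The log of the odds recovers the utility difference to the reference.
log_odds = math.log(p_product / p_reference)
print(round(u_product, 3), round(u_reference, 3), round(log_odds, 3))
```

Under the logit rule the odds do not depend on any other alternatives in the set, which is why the log-odds equal the utility difference exactly.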

As an aside

Interpretation of a DCM-type conjoint analysis generally depends on the scaling of the utilities, i.e. on the definition of the reference product profile and on the sensitivity of the measuring method. Both can be modified in a calibration procedure.

A within-subject comparison of profile utilities is not without reservations. A utility reflects an attitude to the profile with respect to a hypothetical reference profile with zero utility. The actual behavior of an individual depends on the situation. In discrete choice modeling based on raw utilities, it is only the actual choice set that predicts the behavior, given that a choice will happen. This is not always a realistic assumption. For the utility to articulate the personal attitudes and factors related to a given situation, it must be calibrated. In addition, a different situation might require a different set of utilities.

In the case of an aggregate model (a single utility value for a product and a group of individuals), the interpretation of utility differences is often obscured by behavioral inhomogeneity in the sample. This is because individual preferences too often have asymmetric and/or heavy-tailed distributions, and the projection of the respective utilities into observable behavior is strongly non-linear. Averaging individual utilities may lead to erroneous conclusions, as is demonstrated on page What-if Simulations. There is a clear need for derived individual-based measures that can be averaged over the sample.
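Why averaging utilities misleads can be seen in a few lines: because the logistic transform is non-linear, the probability computed from the mean utility differs from the mean of the individual probabilities. This is a sketch with hypothetical, deliberately skewed utilities and a binary logit against a zero-utility reference.

```python
import math
import statistics


def acceptance(u):
    """Binary logit probability against a reference with zero utility."""
    return 1.0 / (1.0 + math.exp(-u))


# Hypothetical individual utilities with a skewed, heavy-tailed spread.
individual_utils = [-6.0, -0.5, 0.0, 0.5, 1.0]

# Aggregate first, then transform: driven by the single extreme respondent.
prob_of_mean_util = acceptance(statistics.mean(individual_utils))
# Transform per individual, then aggregate.
mean_of_probs = statistics.mean([acceptance(u) for u in individual_utils])
print(f"{prob_of_mean_util:.3f} vs {mean_of_probs:.3f}")
```

One strongly negative respondent pulls the mean utility down, whereas that respondent's probability cannot fall below zero, so the two summaries diverge.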

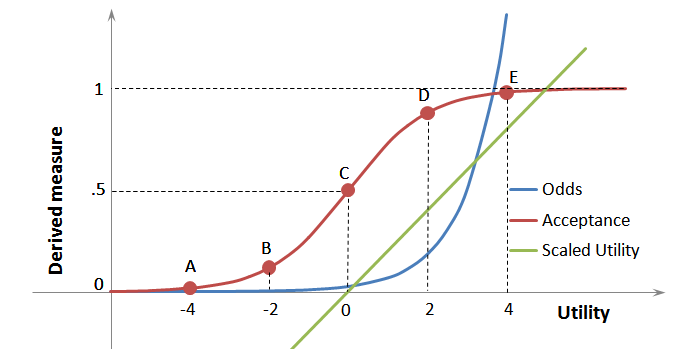

The transformation of raw utilities used in the utility calibration procedure is usually linear (the green straight line in the picture). Raw utilities are shifted so that the zero value (point C) represents an indefinite intention or a neutral statement such as "cannot decide", a stated purchase likelihood of "50%", etc. The value of the slope can be derived from stated percentages using the logit formula, estimated by hierarchical Bayes regression, or set by convention.
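One way to derive a linear calibration from stated percentages with the logit formula is an ordinary least-squares fit of logit(stated probability) on raw utility. This is a minimal sketch under that assumption (the hierarchical Bayes alternative is not shown); the calibration profiles and stated likelihoods are hypothetical.

```python
import math


def logit(p):
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def fit_linear_calibration(raw_utils, stated_probs):
    """Least-squares fit of logit(stated_prob) = intercept + slope * raw_util."""
    y = [logit(p) for p in stated_probs]  # stated probabilities must lie in (0, 1)
    n = len(raw_utils)
    mean_x = sum(raw_utils) / n
    mean_y = sum(y) / n
    sxx = sum((x - mean_x) ** 2 for x in raw_utils)
    sxy = sum((x - mean_x) * (yi - mean_y) for x, yi in zip(raw_utils, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical calibration data: raw utilities of three profiles and the
# purchase likelihoods a respondent stated for them.
raw = [-1.0, 1.0, 3.0]
stated = [0.2, 0.5, 0.8]
a, b = fit_linear_calibration(raw, stated)

# Calibrated utility: the profile stated at 50 % lands on zero (point C).
calibrated = [a + b * u for u in raw]
```

The intercept performs the shift to point C and the slope rescales the sensitivity; both come out of the same fit.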

There are many suggestions for an "Intent Translation Model" in the literature. If the calibration is based on the symmetrical five-point Likert scale, point A may represent the statement "definitely no" and point E the statement "definitely yes" with respect to some event. Intermediate points may be used, e.g. point D for "probably yes" and point B for "probably no". The differences between the various intent translation models seem to come both from sensitivity differences among categories of goods and from cultural or social influences.
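An intent translation model can be written as a lookup from Likert statement to a purchase probability, whose logit then serves as the calibrated utility target. Only the symmetry and the 50% neutral point follow the text; the probabilities in `INTENT_TRANSLATION` are hypothetical (0.982 was picked so that point E falls near 4 logit units).

```python
import math

# Hypothetical intent translation: stated Likert category -> purchase probability.
# The numbers are illustrative assumptions; published models differ by
# product category and by culture.
INTENT_TRANSLATION = {
    "definitely no": 0.018,   # point A
    "probably no": 0.25,      # point B
    "cannot decide": 0.50,    # point C
    "probably yes": 0.75,     # point D
    "definitely yes": 0.982,  # point E
}


def target_utility(statement):
    """Calibrated utility target: the logit of the translated probability."""
    p = INTENT_TRANSLATION[statement]
    return math.log(p / (1.0 - p))


targets = {s: round(target_utility(s), 2) for s in INTENT_TRANSLATION}
```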

A straightforward interpretation can be obtained by reconstructing the observed odds (the blue curve in the picture) from the collected data or the estimated utilities. The attitude to the reference product must be known. The odds, computed as the exponential (antilogarithm) of the difference between the utilities of the two products, tell how many times more often the simulated product will be chosen than the reference one. The odds of any two products that are the same utility distance apart are equal. As the distance grows, the odds of the "better" product grow exponentially, i.e. with an increasingly steep slope. This reflects the known fact that the same difference in utility has a more pronounced effect for a product with a higher utility. In other words, an improvement of a better product is more effective than that of a worse one, provided the choice is made from a set containing both products and satisficing is not in effect.
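The two properties of the odds stated above (dependence on the utility distance only, and exponential growth) can be checked directly. A minimal sketch with illustrative utility values:

```python
import math


def odds(u_product, u_reference):
    """How many times more often the product is chosen than the reference."""
    return math.exp(u_product - u_reference)


# Same utility distance -> same odds, wherever the pair sits on the scale.
same_distance = (odds(1.0, 0.0), odds(3.5, 2.5))

# The same +1 improvement raises the odds against a fixed reference more
# for a product that already has a higher utility.
gain_worse = odds(1.0 + 1.0, 0.0) - odds(1.0, 0.0)
gain_better = odds(3.0 + 1.0, 0.0) - odds(3.0, 0.0)
```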

The measure known as acceptance (the brownish-red curve in the picture) is defined on the interval from 0 to 1 (or 0 to 100%) as the probability that the given product is chosen from a hypothetical pair with a "neutral" reference product whose utility equals zero. The selection of the reference product is crucial. In the case of raw utilities, the utility zero point depends on the estimation settings. If effect coding has been used for all levels of all attributes, the reference product is a hypothetical product in the middle of the interviewed product range. A better approach is to use a constant alternative as the reference product. Averaged acceptances based on raw utilities may give a distorted view of preferences. Raw utilities should be used only in procedures, such as preference share simulation, that are independent of the definition of the reference product.
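The contrast between acceptance and preference share can be sketched as follows: shifting all raw utilities by a constant (as a different zero point from other estimation settings might) changes every acceptance but leaves logit preference shares untouched. The utilities and the shift of 2.0 are hypothetical.

```python
import math


def acceptance(u, u_reference=0.0):
    """Binary logit probability of choosing the product over a reference."""
    return 1.0 / (1.0 + math.exp(-(u - u_reference)))


def preference_shares(utilities):
    """Multinomial logit shares; invariant to adding a constant to all utilities."""
    top = max(utilities)
    weights = [math.exp(u - top) for u in utilities]
    total = sum(weights)
    return [w / total for w in weights]


raw = [0.8, -0.2, 1.5]
shift = 2.0  # hypothetical change of the zero point

acc_before = [acceptance(u) for u in raw]
acc_after = [acceptance(u + shift) for u in raw]
shares_before = preference_shares(raw)
shares_after = preference_shares([u + shift for u in raw])
```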

If acceptance is computed from calibrated utilities based on statements of respondents, it is termed stated acceptance. All products at or to the left of point A can be assumed unacceptable. All products at or to the right of point E can be assumed definitely acceptable, with all acceptances being relative to some reference product (or products) the respondent had in mind when providing the statements. Measurable changes in stated acceptance can be identified only in the region between these two points. Calibration usually leads to utility values several logit units lower than raw utilities. Calibration scaling can either increase or decrease the steepness, that is, the slope of the acceptance tangent at the neutral point C.
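For a linear calibration u = b·(u_raw − u_C), the logistic acceptance has slope 1/4 at zero, so the tangent at point C, measured against raw utility, is b/4. A minimal numerical check of this relationship; the slopes and positions of C used below are hypothetical.

```python
import math


def acceptance(u):
    """Binary logit probability against a zero-utility reference."""
    return 1.0 / (1.0 + math.exp(-u))


def calibrated(u_raw, slope, u_raw_at_c):
    """Linear calibration that maps the neutral point C to zero."""
    return slope * (u_raw - u_raw_at_c)


def slope_at_c(slope, u_raw_at_c, h=1e-6):
    """Central-difference derivative of acceptance w.r.t. raw utility at C."""
    up = acceptance(calibrated(u_raw_at_c + h, slope, u_raw_at_c))
    down = acceptance(calibrated(u_raw_at_c - h, slope, u_raw_at_c))
    return (up - down) / (2 * h)


steeper = slope_at_c(2.0, 1.0)   # calibration slope > 1 steepens the curve
flatter = slope_at_c(0.5, -1.0)  # calibration slope < 1 flattens it
```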

The stated acceptance reflects the propensity of the subject toward the event indicated in the calibration question. The change in acceptance brought about by a change of the underlying utility differs according to the starting value of the acceptance. The acceptance of a nearly fully accepted product D (statement "probably yes") will change less than that of product C, toward which the attitude is indecisive, with an acceptance of 0.5 (50%). The highest sensitivity is found at this point of indifference. For example, a utility of 4 logit units (point E) corresponds to an acceptance of 98.20%, and a utility of 8 logit units to 99.97%. Both values signal definitely acceptable products for the individual, with no practical difference between their acceptances. However, in a competitive scenario, the second product will get exp(8)/exp(4) = exp(4) ≈ 54.6 times higher choice probability than the first one. If the second product were absent from the real choice set, the actual behavior of an individual would depend on awareness of the second product and on satisficing, if relevant. A possible uncertainty in the interpretation of stated acceptance has led us to define a measure we call potential (see below).
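The acceptance values for utilities of 4 and 8 logit units, and the choice-probability ratio of the two products when they compete directly, follow from the binary and multinomial logit formulas:

```python
import math


def acceptance(u):
    """Binary logit probability against a zero-utility reference."""
    return 1.0 / (1.0 + math.exp(-u))


acc_e = acceptance(4.0)                # point E
acc_8 = acceptance(8.0)
ratio = math.exp(8.0) / math.exp(4.0)  # equals exp(4) in direct competition
print(f"{acc_e:.2%} {acc_8:.2%} {ratio:.2f}")  # prints: 98.20% 99.97% 54.60
```

Acceptance saturates near 100%, while the ratio of choice probabilities keeps growing exponentially with the utility gap.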

The shapes of the three common utility-based measures are clearly different. Their mutual position and scaling may change due to calibration transformations that are important for the correct interpretation of the analysis. This suggests the importance of both utility calibrations and what-if simulations that can provide multiple product and scenario characteristics.

A new product must reach some level of acceptance to be marketable. However, a seemingly high average acceptance of the product does not guarantee that it will be successful in terms of market sales. There are many well-established products with varying acceptance among individuals. To overcome the barriers of market introduction, the new product should have some distinct advantage, at least for some target group.

It is well known that respondents are overly "willing" toward the products presented in an interview. The percentage of customers whose stated acceptance of the product, in terms of a possible purchase, is comparable to or better than that of the currently accepted product(s) is the competitive potential of the product. To estimate this percentage, the following procedure, based on Thurstone's theory of comparative judgment, has been adopted.

The probability of success of the product in the competitive arrangement is estimated as the probability of its choice from a binary set consisting of the considered product and a reference entity that can be either of the following:

The threshold value (critical choice probability) for an individual to accept a product is clearly 50% or higher. Either the average probability of "successful crossings" or the percentage of individuals who cross this (or a higher) threshold value can be used to estimate the potential. The latter value is clearly lower but is supposed to be more credible. Its estimation requires a sufficiently large sample.
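Both estimates of the potential can be sketched with a binary logit per individual. This assumes each respondent's reference utility is already known (for example, that of their currently accepted product); the utilities below are hypothetical.

```python
import math


def binary_choice_prob(u_product, u_reference):
    """Probability of choosing the product from the pair (product, reference)."""
    return 1.0 / (1.0 + math.exp(-(u_product - u_reference)))


def competitive_potential(u_products, u_references, threshold=0.5):
    """Return (average crossing probability, share of individuals at/above threshold)."""
    probs = [binary_choice_prob(up, ur) for up, ur in zip(u_products, u_references)]
    average = sum(probs) / len(probs)
    share = sum(p >= threshold for p in probs) / len(probs)
    return average, share


# Hypothetical utilities of the tested product and of each respondent's reference.
u_new = [0.8, 0.0, -0.1, 2.6, -2.7]
u_ref = [0.5, 0.2, 0.3, 0.1, 0.3]
avg, share = competitive_potential(u_new, u_ref)
```

With only five respondents the threshold-based share moves in steps of 20%, which illustrates why it needs a sufficiently large sample.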

Estimation of a stated potential requires that the tested set of products represent a significant portion of the market. The main advantage is that calibration is not required. A disadvantage is that full awareness of the product is assumed and projected into the estimated potential.

An example is available on page Market Potential Example. An additional view can be obtained from a what-if simulation with respect to a number of competing products.

The competitive potential approach is useful when utilities have been estimated using the compensatory model with an additive kernel. With the introduction of non-compensatory modeling, an alternative way to express potential is the estimation of perceptances (perceptional acceptances) based on perceptional thresholds.

The utility of a product is estimated by summing the level part-worths of the attributes that make up the product. It is quite natural to assign each attribute a relative importance equal to the percentage that the span of its part-worths represents in the sum of spans over all attributes. This approach was introduced in the early development of conjoint models with binary attributes. Only later was it extended to attributes with multiple nominal levels that are mutual substitutes. Because the approach was simple to apply, it came to be used also for attributes with multiple quantitative levels. The original requirement for substitutability of the levels has been silently ignored. This has some unavoidable consequences.
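The span-based relative importance described above amounts to a few lines. A sketch with hypothetical part-worths for one individual:

```python
# Hypothetical part-worths (logit units) for one individual.
part_worths = {
    "brand": [0.6, -0.1, -0.5],
    "price": [1.0, 0.2, -1.2],
    "size": [0.3, -0.3],
}


def relative_importance(pw):
    """Share (%) of each attribute's part-worth span in the sum of all spans."""
    spans = {attr: max(levels) - min(levels) for attr, levels in pw.items()}
    total = sum(spans.values())
    return {attr: 100.0 * span / total for attr, span in spans.items()}


importance = relative_importance(part_worths)
```

The result depends only on the extreme levels, so it ignores how many levels an attribute has and how its part-worths behave between them, which is one source of the consequences noted above.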

Comparing the importance of attributes that differ in type, range and/or number of levels is problematic. If the attributes are quantitative, they can be compared using estimates of the partial elasticity of substitution.

In contrast to price elasticity, which is usually related to the gross price of a product, partial elasticity of substitution can be related to any ratio-scaled variable characterizing the product. All other variables are assumed to be constant.
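A minimal sketch of a partial elasticity for one individual, assuming it is taken as the midpoint (arc) elasticity of the product's logit share with respect to one ratio-scaled attribute, all other attributes held fixed, and assuming part-worths are linearly interpolated between tested levels. This is an illustration, not necessarily the exact definition used on this site; the levels, part-worths and competitor utilities are hypothetical.

```python
import math


def logit_shares(utilities):
    """Multinomial logit shares of all alternatives in a choice set."""
    top = max(utilities)
    weights = [math.exp(u - top) for u in utilities]
    total = sum(weights)
    return [w / total for w in weights]


def interpolated_part_worth(x, levels, part_worths):
    """Piecewise-linear part-worth between tested levels (levels sorted ascending)."""
    if not levels[0] <= x <= levels[-1]:
        raise ValueError("x lies outside the tested range of levels")
    for i in range(len(levels) - 1):
        x0, x1 = levels[i], levels[i + 1]
        if x0 <= x <= x1:
            w0, w1 = part_worths[i], part_worths[i + 1]
            return w0 + (w1 - w0) * (x - x0) / (x1 - x0)
    raise ValueError("levels must be sorted in ascending order")


def arc_elasticity(x1, x2, levels, part_worths, base_utility, competitor_utilities):
    """Midpoint (arc) elasticity of the product's share w.r.t. one attribute.

    base_utility holds the summed part-worths of all other attributes, which stay fixed.
    """
    def share_at(x):
        u = base_utility + interpolated_part_worth(x, levels, part_worths)
        return logit_shares([u] + competitor_utilities)[0]

    s1, s2 = share_at(x1), share_at(x2)
    relative_share_change = (s2 - s1) / ((s1 + s2) / 2)
    relative_attribute_change = (x2 - x1) / ((x1 + x2) / 2)
    return relative_share_change / relative_attribute_change


# Hypothetical price levels and part-worths for one individual.
price_levels = [1.0, 1.5, 2.0]
price_part_worths = [0.8, 0.0, -0.9]
elasticity = arc_elasticity(1.4, 1.6, price_levels, price_part_worths,
                            base_utility=0.3, competitor_utilities=[0.0, -0.2])
```

Because the result hinges on the difference between neighboring part-worths, nearly equal part-worths give elasticities close to zero and widely separated ones give very large values.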

Individual elasticities usually have a very broad, heavy-tailed distribution in the sample. In addition to sample heterogeneity, this is due to the part-worth estimation method. In a range of attribute values where choices were either too frequent (at the expense of other ranges) or too rare, the precision is decreased. The stabilization function of an estimation algorithm levels off the part-worths of neighboring levels, while the optimizing function sets them far apart. The HB procedure involves both effects. Some computed elasticities of substitution are therefore close to zero and others are unrealistically large. These features can be observed in the example on page Elasticity of Substitution Example.

The problems mentioned above can be avoided by abandoning any predefined utility model when estimating the conventional (i.e. aggregate share-based) elasticity of a quantitative attribute. An algorithm has been developed that assigns an appropriate weight to each individual's estimated elasticity value so that the influence of potentially imprecise and/or biased values is eliminated. This approach has proved useful in estimating aggregated price elasticity and related properties of FMCG/CPG products. Unfortunately, it is applicable only to simple brand-price problems where choice probabilities can be estimated with sufficient reliability.
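The weighting algorithm itself is not described here. As a stand-in, the sketch below uses inverse-variance weighting, a standard technique and not the algorithm referred to above, assuming per-individual posterior draws of the elasticity (for example, from an HB run) are available. All draws are hypothetical.

```python
import statistics


def inverse_variance_mean(draws_per_individual, min_variance=1e-12):
    """Weighted mean of individual elasticities with weights 1 / variance of draws.

    Individuals whose elasticity draws scatter widely get little weight; the
    floor min_variance avoids division by zero for constant draws.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for draws in draws_per_individual:
        estimate = statistics.mean(draws)
        weight = 1.0 / max(statistics.variance(draws), min_variance)
        weighted_sum += weight * estimate
        weight_total += weight
    return weighted_sum / weight_total


# Hypothetical posterior draws of price elasticity for three individuals.
draws = [
    [-1.4, -1.6, -1.5, -1.5],    # stable estimate
    [-2.1, -1.9, -2.0, -2.0],    # stable estimate
    [-40.0, 10.0, -25.0, 35.0],  # unstable, heavy-tailed estimate
]
weighted = inverse_variance_mean(draws)
naive = statistics.mean([statistics.mean(d) for d in draws])
```

The unweighted mean is dragged toward the unstable respondent, while the weighted mean stays close to the two precise estimates.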

As product utility for an individual is not additive over the sample, other measures have to be used. Measures based on individual utilities have the advantage that the sample segment for aggregation can be defined at interpretation time.

Of the derived individual measures mentioned above, only acceptances can be averaged, provided they express probabilities. Averaging acceptances based on individuals' raw utilities is not a good idea, as the utility reference is arbitrary for each individual, and so are the computed acceptances.

In terms of calibrated utilities, averaged stated acceptance is termed market acceptance. It is the probability that the targets accept the product as a candidate for choice. It may be understood as the stated choice probability of the product on a binary market where a hypothetical reference product is defined as having zero calibrated utility. In the simplified monopolistic situation with just a single product on the market, it equals the stated probability of the choice at decision time. The outcome "not to buy" corresponds to the implicit "outer product", which has zero utility and represents the value of satisfaction from purchasing some other product(s) the purchaser is aware of. The decision probability threshold for estimating the product potential is clearly 1/2 (50%) in this case (the product vs. "something else").
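Market acceptance can be sketched as the mean of individual stated acceptances over a segment chosen at interpretation time. The `Respondent` record, the segment labels and the calibrated utilities are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Respondent:
    calibrated_utility: float  # calibrated utility of the product for this person
    segment: str               # hypothetical segment label


def acceptance(u):
    """Stated acceptance: binary logit against a zero calibrated utility."""
    return 1.0 / (1.0 + math.exp(-u))


def market_acceptance(respondents, in_segment=lambda r: True):
    """Average stated acceptance over the respondents selected by in_segment."""
    selected = [r for r in respondents if in_segment(r)]
    return sum(acceptance(r.calibrated_utility) for r in selected) / len(selected)


sample = [
    Respondent(1.0, "young"),
    Respondent(-2.0, "young"),
    Respondent(0.0, "senior"),
    Respondent(3.0, "senior"),
]
overall = market_acceptance(sample)
young = market_acceptance(sample, lambda r: r.segment == "young")
```

Because the averaging happens after the per-person transform, any segment filter can be applied later without re-estimating the utilities.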

The situation is much more complicated on a market with at least one competing product. Besides using an appropriate variant of a what-if simulation, a measure we call competitive potential can be used (see above).

The probability of buying a product, given that it was bought in the previous purchase, can be called loyalty. In a conjoint environment, loyalty can be defined as the conditional choice probability of a product given that it was chosen in the previous task, provided it is available in the current task. Individuals who do not accept the brand at all do not influence loyalty, as there is no choice to be repeated.

A standard conjoint exercise provides no information on the probability of repeated choice. We therefore take the repeated choice probability to be proportional to the estimated choice probability. At the same time, loyalty means increased resistance to price increases. We therefore weight each individual's contribution to brand loyalty by their immunity to price changes. This modification, while heuristic rather than theoretical, gives better discrimination among brands. An example of brand loyalty is on page Price Part-worth Interpretation.
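One possible formalization of this heuristic, offered as an assumption rather than the exact procedure used here: take the repeat probability equal to the choice probability p, so an individual chooses the brand in two consecutive tasks with probability p², and weight each individual by a price-immunity score w in [0, 1]. Loyalty is then Σ w·p² / Σ w·p, and individuals with p = 0 drop out automatically. The immunity scores are hypothetical inputs.

```python
def brand_loyalty(choice_probs, price_immunity):
    """Immunity-weighted conditional probability of repeating a brand choice.

    Assumes repeat probability == choice probability (proportionality constant 1),
    so the joint probability of choosing the brand twice is p * p.
    """
    repeated = sum(w * p * p for p, w in zip(choice_probs, price_immunity))
    first = sum(w * p for p, w in zip(choice_probs, price_immunity))
    return repeated / first if first > 0 else 0.0


# Hypothetical choice probabilities and price-immunity scores for three people.
probs = [0.6, 0.2, 0.0]
immunity = [0.9, 0.3, 0.5]
loyalty = brand_loyalty(probs, immunity)
```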

The results from a conjoint study can be presented in several formats, which may seem to differ. One has to be aware that different presentations of the same data cannot imply different conclusions. Every presentation method should be designed and used in accordance with the goals of the study. Evidently, the formats that are consistent with the managerial intents should get the highest priority.

It is not uncommon for some results to appear "surprising", "unexpected" or "inconsistent". Silently obscuring, hiding or even rejecting them would be a bad strategy. If the study has been carefully designed in close cooperation with the client, is robust in all possible aspects, and a substantial part of the results is reasonable, the "strange" results may signal either a threat or an opportunity and deserve more attention than those suggesting "everything as expected". A round-table discussion of the results is always useful.